Critical summary review

In nineteen ninety six, the internet was in its infancy. Few households in the United States had a connection. Dial up modems screeched, pages took minutes to load, and nobody had any idea what it would all become. It was in that world, when the online universe was still learning to crawl, that the United States Congress passed Section two thirty of the Communications Decency Act. The idea was simple and, at the time, made perfect sense... if someone publishes something on a digital platform, the responsibility lies with the person who published it, not with the platform. Think of a noticeboard in a coffee shop. If someone pins an offensive note to the board, the shop owner does not get sued for it. He just provided the wall.

That logic held for nearly thirty years. Thanks to it, companies like Google, Facebook, YouTube and many others were able to grow without the constant fear of being sued over everything their billions of users posted. Without that protection, the internet as we know it probably would not exist. Every comment, every video, every photo could have triggered a lawsuit against the company that hosted it.

But what happens when the platform stops being just the wall and starts choosing which notes sit at the top, which ones get pushed to more people, and which ones are arranged so that you cannot stop reading?

That is the question two American juries answered in less than forty eight hours during the last week of March twenty twenty six.

In New Mexico, a jury found Meta, the owner of Facebook and Instagram, liable and ordered it to pay three hundred and seventy five million dollars to the state. The accusation was straightforward... the company misled consumers about the safety of its platforms and failed to protect children from sexual predators. The state's attorney general, Raúl Torrez, had filed the lawsuit in twenty twenty three after an undercover operation in which investigators created a fake profile of a thirteen year old girl. The profile was flooded with approaches from predators. Jurors found tens of thousands of violations of the state's consumer protection law and applied the maximum penalty of five thousand dollars per violation; at that rate, the award corresponds to seventy five thousand violations. Even so, the amount fell well short of the two billion dollars prosecutors had sought. For perspective, Meta is valued at roughly one point five trillion dollars. Its shares rose five per cent after the verdict, a clear signal that financial markets, at least for now, did not see the bill as a real threat to the business.

One day later, in Los Angeles, another jury reached an even more consequential conclusion. A twenty year old woman, identified only by the initials K.G.M., sued Meta and Google's YouTube, alleging that she became addicted to the platforms as a teenager and that the addiction severely worsened her mental health, including depression. The jury agreed. It found both companies negligent in the design of their products and determined that this negligence was a substantial factor in the harm she suffered. Damages totalled six million dollars. Three million were compensatory, with Meta bearing seventy per cent, or two point one million, and YouTube the remaining thirty per cent, or nine hundred thousand. The other three million were punitive damages, which the jury added after concluding that the companies had acted with malice.

Now, what makes these two cases so significant is not the size of the fines. It is the door they opened.

For decades, lawsuits against digital platforms crashed into the wall of Section two thirty and bounced back. The law shielded companies from liability over third party content, and courts almost always agreed. But the lawyers in these two cases used a different strategy. Instead of arguing that the content on the platforms caused harm, they argued that the design of the platforms itself was the problem. It was not what users posted. It was how the product was built... infinite scroll, constant notifications, videos that start playing on their own, beauty filters, and algorithms that prioritise whatever content generates the most engagement, even when that content is harmful. The lawyers treated Instagram and YouTube as defective products, in the same way someone sues a car manufacturer over brakes that fail.

This shift in framing, from content to conduct, is what allowed the cases to clear the Section two thirty barrier. Judges in both cases rejected the defendants' requests to dismiss the lawsuits on the basis of that legal protection. And the juries, after weeks of hearing testimony and reviewing internal company documents, sided with the plaintiffs. Gregory Dickinson, a law professor at the University of Nebraska, noted that courts are increasingly distinguishing claims about platform functionality from claims that would simply impose liability for third party speech.

The Los Angeles trial had dramatic moments. Mark Zuckerberg, Meta's chief executive, testified in person before the jury in February. Internal company documents presented during the proceedings revealed discussions about how to attract and retain young users. One of those documents estimated the lifetime value of a thirteen year old user to the company at around two hundred and seventy dollars. Another stated that the younger users start, the more likely they are to keep using the platform. In internal messages, employees compared Instagram to drugs and slot machines. Meta's defence argued there is no definitive scientific proof that social media causes mental health problems, and that the company has invested in safety features for young users. Zuckerberg told the jury that keeping young users safe has always been a company priority.

This case was selected as a bellwether, a test case, within a bundle of approximately two thousand similar lawsuits filed by parents and school districts in California. Thousands more are proceeding through federal courts across the country. TikTok and Snapchat, which were also defendants in the Los Angeles case, settled with the plaintiff before the trial began. Meta and Google have both said they will appeal the verdicts.

And it is in the appeals that the real battle will unfold. So far, no appellate court in the United States has ruled on whether platform design decisions are protected by Section two thirty. Several trial courts have already said they are not, but it is appellate courts that create binding precedent, the kind that compels other courts to follow the same interpretation. If an appellate court upholds the verdicts, it could clear the path for a wave of similar rulings. Roblox, for instance, faces more than one hundred and thirty federal lawsuits accusing the gaming platform of failing to protect its users from sexual exploitation. Eric Goldman, co director of the High Tech Law Institute at Santa Clara University, put it bluntly... it is the internet that is on trial, not just social media.

The United States Supreme Court has already shown interest in the issue. In twenty twenty three, it heard a case involving YouTube but ultimately sidestepped a ruling on the limits of Section two thirty. In twenty twenty four, it declined to hear the appeal of a Texas teenager who had sued Snapchat. Two conservative justices, Clarence Thomas and Neil Gorsuch, dissented from that decision, writing that social media platforms have increasingly used Section two thirty as a get out of jail free card. Meetali Jain, director of the Tech Justice Law Project, believes the Supreme Court may now be ready to take on a case like this.

The comparison many analysts are drawing is with the tobacco industry in the nineteen nineties. Back then, cigarette companies knew that nicotine was addictive and that tobacco killed, yet they denied everything publicly. When internal documents surfaced and executives were caught lying under oath, the industry lost in court and ultimately agreed to pay two hundred and six billion dollars in settlements with more than forty American states. That transformed the entire sector, which was forced to stop marketing to minors and to accept sweeping restrictions on its advertising. The same law firms that led those cases against tobacco manufacturers, such as Lieff Cabraser and Motley Rice, are now leading the litigation against Meta, TikTok, YouTube and Snap.

But the comparison has its limits. Unlike tobacco, social media platforms are vehicles for expression, which brings in the First Amendment, the constitutional guarantee of free speech. Furthermore, tobacco addiction is a physical and chemical phenomenon driven by nicotine, whereas so called social media addiction is behavioural, and scientists are still debating its nature and severity. The American Psychiatric Association does not recognise social media addiction as an official diagnosis. The companies argue they are being made scapegoats for problems with many causes, including family dynamics, genetic predispositions and socioeconomic factors.

Even so, the regulatory landscape is shifting rapidly. At least twenty American states passed legislation last year addressing children's use of social media. Australia banned under sixteens from social media platforms altogether. France and the United Kingdom are proposing similar measures. The United States Surgeon General has called for social media platforms to carry health warnings similar to those on cigarette packets.

For technology companies, the verdicts carry a clear message... platform design can now be scrutinised by juries, and internal decisions about engagement can become evidence of defective design in court. This applies beyond social media. Any company building a predictive system, from a financial application to a virtual assistant, needs to consider that its algorithms may be treated as products subject to civil liability.

What to do with this information

There are different scenarios depending on who you are and how you interact with technology.

If you are a parent, now is the time to pay closer attention. It is not about taking the phone away overnight, but about understanding that the platforms were engineered to capture attention, especially from young people. Explore the parental controls that already exist. Have conversations about screen time. And keep an eye on how the legal landscape evolves, because court rulings may force changes to the platforms in the coming months.

If you work in technology, the takeaway is practical. Design decisions that prioritise engagement over safety can now be challenged in court. Internal documents, debates in company chat channels, tests that reveal risks which are then ignored... all of this can become evidence in a lawsuit. If your company builds digital products used by minors, the safest path is to treat safety as part of the design from day one, not as a problem to solve when the lawsuit arrives.

If you are an investor, the numbers have not spooked the market yet, as the rise in Meta's share price after the New Mexico verdict showed. But the trend is clear. Thousands of consolidated lawsuits are in play, with bellwether trials running through twenty twenty six. If the appeals courts uphold these verdicts and narrow the reach of Section two thirty, the legal exposure of big tech could grow far beyond what markets are pricing in today. This is worth watching closely.

If you are simply a social media user, the most useful piece of information is this... that feeling of not being able to put your phone down may not just be a lack of willpower. The platforms were designed to work that way. Knowing that can already change how you negotiate with yourself about the time you spend online.

And for everyone, regardless of their role in this story... the shield that protected the internet for thirty years is cracking. It has not fallen yet. The companies will appeal, higher courts will weigh in, and the outcome may take years. But the direction became clearer this week. The question is no longer whether big tech will be held accountable for the design of its products. It is when, at what scale, and who will foot the bill.

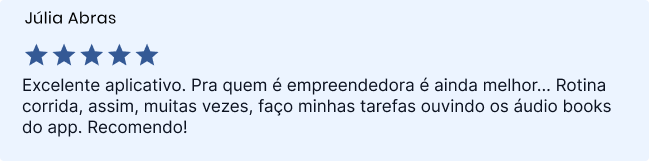

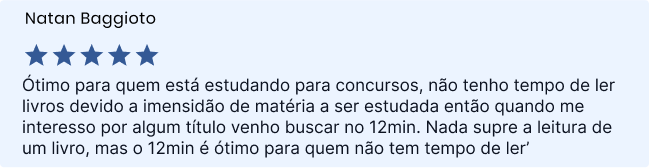

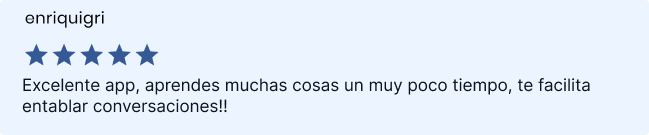

Sign up and read for free!

By signing up, you will get a free 7-day Trial to enjoy everything that 12min has to offer.